In Figure 3.2, the variable was read from main memory (or an L2 cache) that both processors share, so both copies of the variable contain the same value. If core 1 or core 2 then performs a load instruction to read variable “a,” correct execution occurs. However, if core 1 performs a store instruction (step 3) that modifies variable “a,” and core 2 subsequently performs a load instruction from that variable, the load must see the new value. Therefore, the new value must somehow be propagated to the copy of variable “a” in core 2.
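The store-then-load scenario can be sketched as a toy simulation. This is a minimal sketch, not a model of real hardware: the `Core` class, the write-through `store`, the `coherent` flag, and the `run` helper are all illustrative assumptions that do not come from the source.

```python
# Toy model: two cores with private caches over one shared memory.
# All names here are hypothetical and chosen only for illustration.

class Core:
    def __init__(self, memory):
        self.memory = memory   # shared backing store (a dict)
        self.cache = {}        # private cache: variable name -> value
        self.peers = []        # the other cores; filled in by connect()

    def load(self, name):
        # On a miss, fetch the value from shared memory.
        if name not in self.cache:
            self.cache[name] = self.memory[name]
        return self.cache[name]

    def store(self, name, value, coherent=True):
        self.cache[name] = value
        self.memory[name] = value  # write-through, to keep the sketch short
        if coherent:
            # Write-invalidate: drop every other cached copy of the variable.
            for peer in self.peers:
                peer.cache.pop(name, None)


def connect(*cores):
    # Let every core know about the others.
    for core in cores:
        core.peers = [c for c in cores if c is not core]


def run(coherent):
    memory = {"a": 1}
    core1, core2 = Core(memory), Core(memory)
    connect(core1, core2)
    core1.load("a")                          # step 1: core 1 caches "a"
    core2.load("a")                          # step 2: core 2 caches "a"
    core1.store("a", 2, coherent=coherent)   # step 3: core 1 modifies "a"
    return core2.load("a")                   # core 2 reads "a" again
```

Under these assumptions, `run(False)` leaves core 2 reading the stale value 1 from its own cache, while `run(True)` makes core 2's next load miss and fetch the new value 2.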

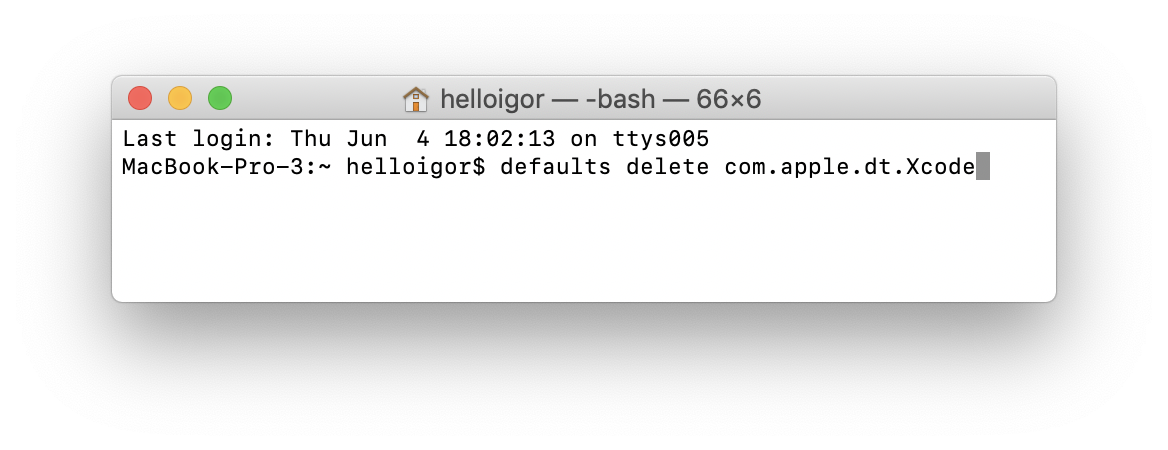

Cache coherency becomes a concern when multiple processor cores share the same memory hierarchy but have their own L1 data and instruction caches. Incorrect execution could occur if two or more copies of a given cache block exist in two processors’ caches and one of these copies is modified. Figure 3.2 shows the case where core 1 and core 2 both have a copy of variable “a” in their caches (steps 1 and 2).

Extra memory will be useless if the amount of time required for memory requests to be processed does not improve as well. Redesigning the interconnection network between cores is currently a major focus of chip manufacturers. A faster network means lower latency in inter-core communication and memory transactions. Intel is developing its QuickPath Interconnect, which is a 20-bit wide bus running between 4.8 and 6.4 GHz. AMD’s new HyperTransport 3.0 is a 32-bit wide bus and runs at 5.2 GHz. Using five mesh networks gives the Tile architecture a per-core bandwidth of up to 1.28 Tbps (terabits per second). Despite these efforts, the question remains: which type of interconnect is best suited for multicore processors? Is a bus-based approach better than an interconnection network? Or is a hybrid like the mesh network a better option?

Intel’s Core 2 Duo tries to speed up cache coherence by being able to query the second core’s L1 cache and the shared L2 cache simultaneously. Having a shared L2 cache also has the added benefit that a coherence protocol does not need to be set for this level. AMD’s Athlon 64 X2, however, has to monitor cache coherence in both L1 and L2 caches. This is made faster by using the HyperTransport connection, but still has more overhead than Intel’s model.

In a directory-based scheme, a directory holds information about which memory locations are being shared in multiple caches and which are used exclusively by one core’s cache. The directory knows when a block needs to be updated or invalidated. Unlike snooping, which is not scalable, a directory-based scheme can scale to many cores.
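The bookkeeping a directory performs can be illustrated with a small sketch. It assumes a single central directory and integer core ids. The `Directory` class and its method names are hypothetical and are not drawn from any real protocol implementation.

```python
class Directory:
    """Tracks, per memory block, which cores hold a cached copy.

    Hypothetical sketch: real directories also track dirty state,
    pending requests, and more.
    """

    def __init__(self):
        self.sharers = {}  # block address -> set of core ids holding a copy

    def record_read(self, core, block):
        # A read adds the requesting core to the block's sharer set.
        self.sharers.setdefault(block, set()).add(core)

    def record_write(self, core, block):
        """Return the cores whose copies must be invalidated."""
        holders = self.sharers.get(block, set())
        to_invalidate = sorted(holders - {core})
        # After the write, only the writer holds a valid copy.
        self.sharers[block] = {core}
        return to_invalidate

    def is_exclusive(self, block):
        # True when exactly one core's cache holds the block.
        return len(self.sharers.get(block, set())) == 1


directory = Directory()
directory.record_read(0, 0x40)
directory.record_read(1, 0x40)
directory.record_read(2, 0x80)
invalidated = directory.record_write(0, 0x40)  # only core 1 shares block 0x40
```

Because the directory knows exactly which caches hold a block, the write above notifies only core 1 and never touches core 2. Sending point-to-point messages rather than broadcasting to every cache is what lets this kind of scheme work on an arbitrary network.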

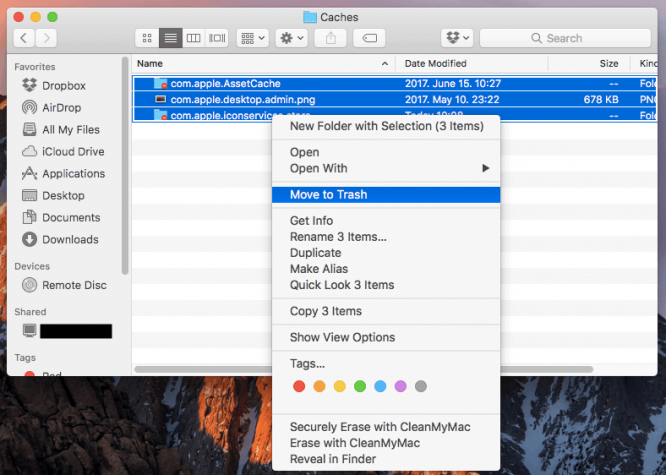

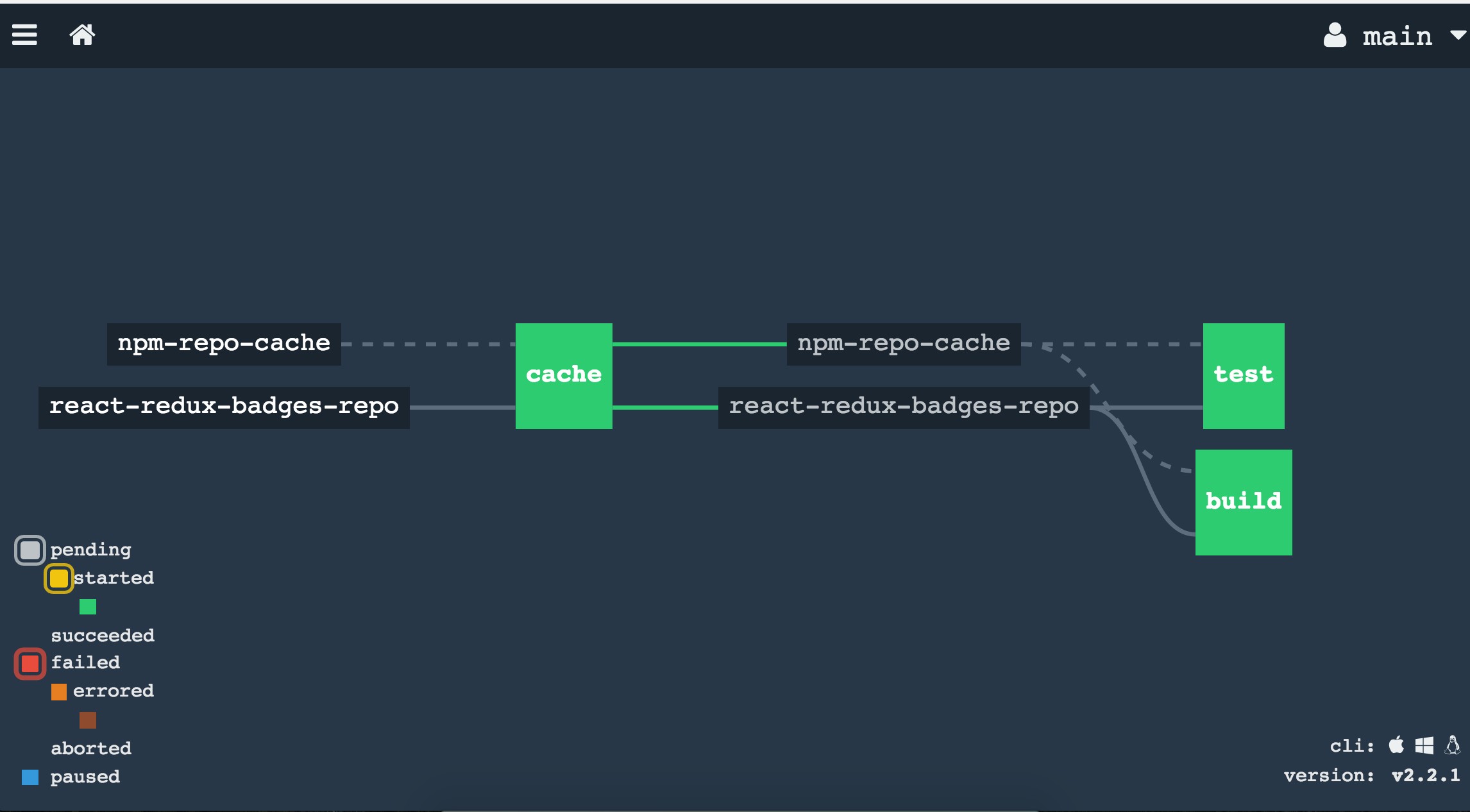

Peng Zhang, in Advanced Industrial Control Technology, 2010

(b) Cache coherence

Cache coherence is a concern in a multicore environment because of distributed L1 and L2 caches. Since each core has its own cache, the copy of the data in that cache may not always be the most up-to-date version. For example, imagine a dual-core processor where each core brought a block of memory into its private cache, and then one core writes a value to a specific location. When the second core attempts to read that value from its cache, it will not have the most recent version unless its cache entry is invalidated and a cache miss occurs. This cache miss forces the second core’s cache entry to be updated. If this coherence policy were not in place, the wrong data would be read and invalid results would be produced, possibly crashing the program or the entire computer.

In general there are two schemes for cache coherence: a snooping protocol and a directory-based protocol. The snooping protocol only works with a bus-based system, and uses a number of states to determine whether or not it needs to update cache entries and whether it has control over writing to the block. The directory-based protocol can be used on an arbitrary network and is, therefore, scalable to many processors or cores.
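The per-block states that a snooping protocol keeps can be sketched as a small state table. The source does not name the states, so this example assumes the textbook MSI protocol (Modified, Shared, Invalid) as one common choice. The event names and helper functions are hypothetical.

```python
# States for one cache line in a simplified MSI protocol (an assumption;
# the source does not say which states a given snooping protocol uses).
MODIFIED, SHARED, INVALID = "M", "S", "I"

# (current state, observed event) -> next state
# "local_*" events come from this core; "bus_*" events are snooped
# from other cores on the shared bus.
TRANSITIONS = {
    (INVALID, "local_read"): SHARED,
    (INVALID, "local_write"): MODIFIED,
    (INVALID, "bus_read"): INVALID,
    (INVALID, "bus_write"): INVALID,
    (SHARED, "local_read"): SHARED,
    (SHARED, "local_write"): MODIFIED,
    (SHARED, "bus_read"): SHARED,
    (SHARED, "bus_write"): INVALID,     # another core wrote: drop our copy
    (MODIFIED, "local_read"): MODIFIED,
    (MODIFIED, "local_write"): MODIFIED,
    (MODIFIED, "bus_read"): SHARED,     # supply the data, keep a shared copy
    (MODIFIED, "bus_write"): INVALID,
}


def next_state(state, event):
    """Look up the state a cache line moves to after an event."""
    return TRANSITIONS[(state, event)]


def has_write_control(state):
    """Only a Modified line may be written without a bus transaction."""
    return state == MODIFIED
```

In this sketch, a line in the Shared state that snoops another core's write moves to Invalid, which is what forces the later cache miss described in the dual-core example.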
